Despite the growing strategic importance of these centers, most discussions on AI governance still emphasize model behavior, ethical issues, and application-specific regulations, while neglecting the security of the infrastructure that supports these systems’ operational functionality.

This paper identifies four primary threats to AI data centers: model compromise and theft, data poisoning and pipeline attacks, infrastructure disruption through cyber-physical interactions, and supply chain and firmware vulnerabilities. The analysis suggests that these threats could trigger cascading effects across diverse sectors and geographical regions.

The AI Infrastructure Cyber Shield is proposed as a holistic protective mechanism. This system incorporates methodologies for securing physical locations, cloud computing platforms, operating systems, and organizations, alongside the protection of models and data. Furthermore, it integrates governance frameworks at both national and international levels. This investigation contributes to the field of AI Infrastructure Cybersecurity by recognizing AI data centers as critical infrastructure and promoting a collaborative security strategy among government bodies and technology companies. This framework offers a policy-oriented approach designed to mitigate the systemic risks inherent in artificial intelligence.

Introduction

Artificial intelligence has moved beyond research labs and specialized digital projects. It is now a crucial part of decision-making in various industries, including finance, healthcare, transportation, energy, and defense. [1]

Simultaneously, the swift progress of artificial intelligence models and their functionalities has amplified the significance of the infrastructure essential for their training and operational deployment.

This includes, in particular, high-performance computing clusters and data centers designed specifically for AI. [2]

Yet most academic and policy discussion still centers on algorithmic bias, system integrity, and ethical governance frameworks. Far less attention goes to the digital and physical infrastructure that makes these systems work and keeps them running.

AI data centers, distinguished by their considerable computational power and their ability to store proprietary foundation models and sensitive datasets within intricately linked infrastructures, constitute a concentration of technological influence. This concentration serves efficiency and innovation, but it also creates distinct structural vulnerabilities.

As a result, these facilities become attractive targets for cybercriminals and state-sponsored groups. These groups may seek to engage in digital espionage, steal intellectual property, or strategically disrupt the global digital infrastructure. [3]

A successful attack on such a facility could potentially lead to the release of models or the compromise of training processes. This, in turn, could cause widespread service disruptions across industries that rely on AI systems. [4]

Given the global digital system’s dependence on a limited number of cloud computing and AI service providers, a security breach at a critical facility could generate cascading effects that transcend industry boundaries and national borders. This underscores the inherent vulnerability of the global digital infrastructure. [5]

Even though the risks are growing, AI infrastructure security has attracted little research attention. Most research on AI safety examines how models behave and how reliable they are, while critical infrastructure studies rarely treat AI data centers as a distinct category that needs special protection. [6] This study addresses that gap by answering two questions: why have AI data centers become high-value cyber targets, and who is responsible for securing them? To do so, it examines the importance of these centers to the global digital ecosystem and the most common threats against them, namely model theft, data poisoning, infrastructure disruption, and supply chain compromise. It also develops several attack scenarios to assess how such attacks would affect the wider system.

In response, the study proposes a multi-layered protective framework termed the Cyber Shield for AI Infrastructure, along with a set of practical policy recommendations. It draws on several theoretical frameworks, including critical infrastructure theory, AI security studies, and digital geopolitics. Together, these establish AI data centers as a distinct and important object of study in modern cybersecurity.

Methodology

This study uses a qualitative strategic analysis to examine the vulnerabilities within artificial intelligence (AI) infrastructure and to assess the effectiveness of current regulations and policies. The research is organized into four main analytical stages.

First, a systematic review of the existing research is conducted, concentrating on critical infrastructure protection (CIP) and the systemic risks present in complex digital ecosystems. This initial phase establishes the theoretical basis for understanding the relationship between centralized technological assets and national security.

The subsequent phase of this research involves a comparative policy analysis, focusing on several significant jurisdictions. This analysis includes an examination of the United Kingdom’s Critical National Infrastructure (CNI) protocols, the European Union’s NIS2 Directive and Artificial Intelligence Act, and the regulatory framework governing digital infrastructure security within the United States.

Third, the research develops a classification of cyber threats specific to AI. This part of the study categorizes the attack methods and weaknesses that are unique to machine learning environments.

This classification is based on a review of existing security taxonomies.

Fourth, the study employs scenario planning to examine potential adversarial targeting of AI data centers. These scenarios analyze the cascading impacts such disruptions would impose across vital socio-economic sectors.

Ultimately, this research adopts a normative, policy-oriented approach. It aims to identify emerging security challenges and suggest robust strategic solutions. Instead of using quantitative models, the study focuses on creating broad regulatory frameworks designed to strengthen the resilience of national digital assets.

Conceptual framework

Definition of AI data centers

AI data centers differ fundamentally from traditional server facilities. They are designed specifically for training, deploying, and maintaining large-scale AI systems. These facilities use a high-density computing architecture built from integrated groups of computing accelerators, such as graphics processing units (GPUs), tensor processing units (TPUs), and other specialized chips. These components are connected by high-bandwidth networks and supported by large storage systems that can handle the huge amounts of data used for training and inference. [7]

These centers do not simply host applications or store data; they are designed and optimized for continuous, intensive computing, including large-scale matrix and tensor operations, low-latency inference, and, in many cases, the continuous updating and optimization of models. [8] In this context, major cloud computing companies operate dedicated AI computing clusters, sometimes referred to as super-clusters or AI factories, which house tens of thousands of computing accelerators within a single technology campus or defined geographic area. [9] However, the strategic importance of these centers extends beyond their physical components to the intangible assets they house. These facilities contain high-value proprietary foundation models, specialized AI systems in sectors such as finance and healthcare, and carefully managed datasets and training systems. [10] For both businesses and governments, these centers have become an increasingly important form of strategic infrastructure.

Several countries have initiated national AI computing centers or sovereign AI cloud initiatives to ensure local access to advanced computing resources and enhance their technological independence. [11] As a result, AI data centers are strategically important because they connect three key areas: industrial competitiveness, digital sovereignty, and national security policies. [12]

Differences between AI data centers and traditional data centers

The distinctions between AI data centers and conventional facilities encompass more than just technical specifications; they also involve security and operational factors. A key differentiator is the substantial computational demand inherent in AI systems. Large-scale AI computing clusters can consume hundreds of megawatts of electrical power, thereby requiring improved connectivity to power grids, sophisticated cooling mechanisms, and close integration with regional energy infrastructures. [13]

This interdependence implies that any disruption to power distribution or building management systems can precipitate immediate and severe consequences for AI operations. [14] Furthermore, at the software level, AI environments exhibit a greater degree of complexity compared to traditional systems.

They rely on multiple layers of technical frameworks, including distributed training libraries, GPU scheduling and orchestration tools, model registries, and model lifecycle management (MLOps) tooling. Each of these components can introduce a vulnerability. Unsecured application programming interfaces, inadequate access control management, and dependence on compromised external libraries, for instance, can create indirect routes to critical systems. [15] In these complex environments, security breaches often do not stem from a single flaw. Instead, they usually result from the interaction of several vulnerabilities across different system layers.

Furthermore, a key attribute of AI data centers is the dynamic character of model-related workloads. Models undergo periodic updates, are fine-tuned for specific applications, and are integrated with external data sources and tools. Although this flexibility improves performance and adaptability, it simultaneously broadens the attack surface for cyber threats.

Consequently, techniques such as data poisoning, prompt injection, and targeted model manipulation pose a more concrete threat in AI environments than in traditional hosting environments. [16]

Many AI data centers also operate on a multi-tenant model, where the infrastructure is shared among multiple organizations or users. Although this model is economically efficient, it also introduces novel security vulnerabilities. These include data leakage between tenants, the exploitation of side channels on shared hardware, and the unauthorized extraction of models through shared computational resources. [17]

Furthermore, these facilities are now increasingly governed by complex regulatory and governance structures concerning AI risk management, safety protocols, and adherence to regulations. As a result, the consequences of any security breach could extend beyond immediate technical failures, potentially encompassing severe regulatory actions, reputational damage, and, in certain instances, geopolitical conflicts. [18]

2. The strategic importance of AI data centers

2.1 Economic value

Investment in AI data centers has grown sharply in recent years. Cloud companies, chipmakers, and governments are investing heavily in these facilities to secure the computing power needed to train massive models and offer generative AI services at scale. [19]

Within the current decade, global capital spending on AI data infrastructure is projected to surpass several hundred billion dollars, driven by the surging need for GPU-intensive computing clusters, high-speed networks, and advanced power and cooling systems. [20]

These facilities constitute not only considerable physical investments but also function as centers for the aggregation of high-value intangible digital assets, including proprietary models, meticulously curated datasets, and intricate software pipelines crucial for the development and operation of intelligent systems. [21] As AI emerges as a crucial catalyst for productivity, innovation, and competitive advantage in international markets, access to substantial computing resources is evolving into a critical source of both economic and strategic influence. [22] Organizations that control expansive AI infrastructure possess the capacity to cultivate comprehensive innovation ecosystems centered on their platforms, which can include startups, research initiatives, and diverse enterprise services. Likewise, nations that possess considerable AI computing capabilities are striving to position themselves as regional or global centers for advanced technological development. [23]

However, this concentration of financial resources and technical expertise also creates greater security exposure. The economic advantages of AI data centers make them attractive targets for cyber espionage, ransomware, and digital sabotage. This is especially true in a regulatory and security environment that has not fully addressed the risks associated with this infrastructure. [24]

2.2 Role in national security

Artificial intelligence (AI) systems are no longer marginal tools within national security frameworks; they have become integral to core defense and intelligence functions. Many intelligence assessment, cyber defense, logistical coordination, and operational planning processes rely on AI-powered analytical systems. [25]

Military and security institutions are increasingly relying on both commercial cloud computing platforms and sovereign AI environments to train and deploy models capable of analyzing satellite imagery, processing open-source intelligence, and interpreting cyber threat data. [26] In practice, data centers hosting these capabilities function as an integral part of the national security infrastructure, even when these facilities are owned and operated by private companies. [27]

AI’s significance extends beyond direct military applications, as it is essential for maintaining the stability of several critical sectors. Power grids, water systems, and financial infrastructures increasingly depend on AI-driven analytical tools for anomaly detection, predictive maintenance, and the continuous monitoring of operational risks. [28] Disruptions or compromises to the data centers that underpin these systems have consequences that extend beyond mere service interruptions. These occurrences can lead to a degradation of a state’s operational capabilities and its capacity to recognize evolving threats, thus impairing its ability to mount effective responses to simultaneous cyber or physical assaults. [29] As a result, for adversaries seeking to gain strategic benefits without resorting to direct military engagement, the targeting of AI infrastructure offers a potent means of simultaneously compromising multiple layers of defense systems. [30]

2.3 The reliance of various sectors on artificial intelligence

The reliance on AI data centers is no longer confined to the technology sector; it has extended to most major economic sectors. In the financial sector, machine learning systems form the basis for a number of vital processes, such as fraud detection, anti-money laundering compliance, credit risk assessment, and high-frequency trading strategies. [31]

In the healthcare sector, medical institutions have begun deploying AI-powered clinical language models and medical image analysis systems to support diagnostic processes, treatment decision-making, and operational efficiency. These systems are often run on cloud platforms rather than on-premises infrastructure. [32]

In logistics and manufacturing, AI is used to support predictive maintenance, demand forecasting, and transportation route optimization, while transportation authorities are experimenting with AI-powered traffic management systems and autonomous mobility technologies. [33]

Digital platforms are among the most reliant on large-scale AI infrastructure. Search engines, social networks, and e-commerce platforms rely on recommendation systems, ranking algorithms, and generative models to personalize content for users, manage digital interaction, and maintain high levels of engagement. [34] Much of this activity is concentrated within a relatively limited number of global AI computing regions operated by a small number of service providers. [35] This concentration creates widespread structural dependence, such that any disruption in one of the main AI data centers may not remain an isolated incident, but may extend its impact across multiple sectors and different national borders, producing effects similar to those that may result from the disruption of global payment networks or critical energy infrastructure. [36]

3. Cyber threats to AI data centers

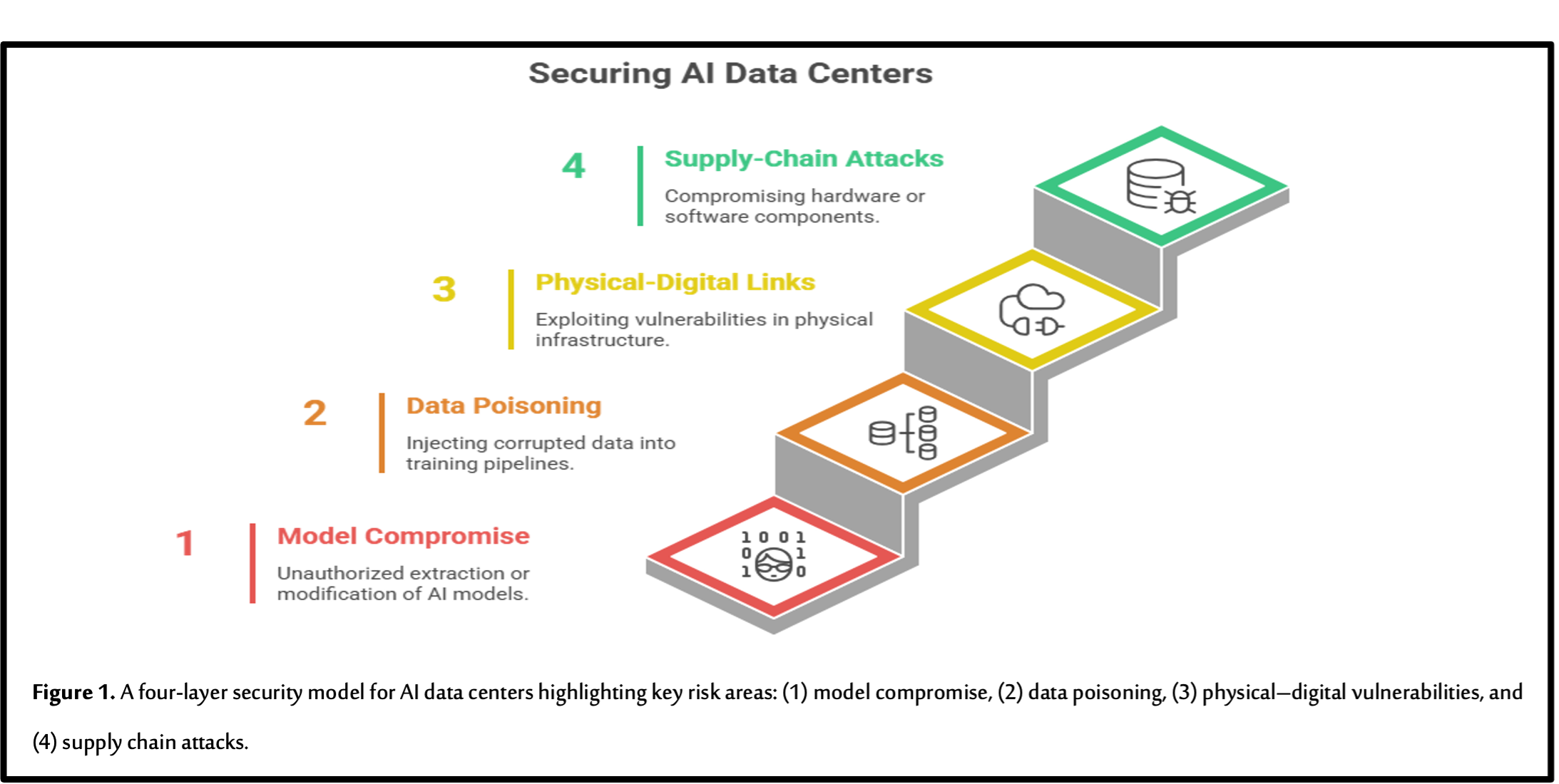

AI data centers are part of the critical infrastructure that stores, processes, and trains large-scale machine learning models, making them attractive targets for cyberattacks. As Figure 1 illustrates, threats targeting these centers can originate across multiple layers of the AI ecosystem, impacting both the digital and physical components of the infrastructure.

3.1 Model compromise

Model compromise involves the unauthorized acquisition of proprietary models, as well as the covert modification of their parameters or operational settings. High-value foundation models, as well as those tailored to specific domains, represent considerable investments in data, computational power, and specialized engineering expertise. Therefore, if malicious actors gain access to model weights, they can replicate the same capabilities, circumvent the commercial licensing agreements associated with these models, or employ them for hostile or competitive purposes.

Common attack vectors in this domain include misconfigured access controls, vulnerabilities within application programming interfaces (APIs), and unauthorized insider access to the storage and backup systems that contain the models.

The threat extends beyond model theft; adversaries may also implant backdoors or malicious behaviors into a model during training, fine-tuning, or operational deployment. Because many AI systems are opaque and are often evaluated only on aggregate performance, such changes might go unnoticed for a long time. This is especially true when the backdoors are designed to activate only under specific conditions or stimuli. [37]

In critical applications such as anomaly detection in industrial systems, clinical decision support in healthcare, and threat analysis in cybersecurity, these subtle compromises can have serious consequences. They can undermine both the operational reliability and the strategic security of AI systems.

3.2 Data poisoning and pipeline attacks

Data poisoning is a well-known threat in machine learning security, but it is particularly significant in AI data centers that rely on continuous learning or receive massive amounts of external data. [38] Studies have shown that even relatively small proportions of contaminated data within training sets can lead to significant degradation of model performance or targeted classification errors, even in large-scale models.

For example, language models used in clinical medical applications have demonstrated susceptibility to contaminated medical datasets, potentially resulting in unsafe or inaccurate treatment recommendations in specific situations.

Attackers can exploit multiple points along the data processing chain, including:

- Web-based pre-training datasets

- Domain-specific databases

- User feedback loops

- Data labeling and annotation processes

Furthermore, compromised data sources, such as external data providers or poorly configured data entry points, can allow the injection of malicious samples into central data repositories or training pipelines. Because AI data centers often host shared pipelines that support multiple models and multiple users, a single successful poisoning attack can spread rapidly across multiple applications, causing widespread effects before any sign of compromise becomes apparent. [39]
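One way to make later tampering with ingested data detectable is a hash-chained provenance log, in which each record commits to the record before it. The following is a minimal sketch under stated assumptions: the source names, the record fields, and the functions `append_record` and `chain_is_intact` are hypothetical and are not drawn from any cited framework.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def _hash_body(body: dict) -> str:
    # Serialize with sorted keys so the same body always yields the same hash.
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_record(log: list[dict], source: str, batch: bytes) -> None:
    """Append a provenance record whose hash covers the previous record's hash."""
    body = {
        "source": source,
        "batch_sha256": hashlib.sha256(batch).hexdigest(),
        "prev_hash": log[-1]["record_hash"] if log else GENESIS,
    }
    log.append({**body, "record_hash": _hash_body(body)})


def chain_is_intact(log: list[dict]) -> bool:
    """Recompute every hash; an edited, removed, or reordered record breaks the chain."""
    prev_hash = GENESIS
    for rec in log:
        body = {k: rec[k] for k in ("source", "batch_sha256", "prev_hash")}
        if rec["prev_hash"] != prev_hash or rec["record_hash"] != _hash_body(body):
            return False
        prev_hash = rec["record_hash"]
    return True


log: list[dict] = []
append_record(log, "provider-a", b"txn batch 001")      # hypothetical sources
append_record(log, "provider-b", b"sensor batch 001")
print(chain_is_intact(log))   # True

log[0]["source"] = "provider-x"   # simulate after-the-fact tampering
print(chain_is_intact(log))   # False
```

A log of this kind only shows that records were altered after they were written. It does not detect samples that were already poisoned at the source, and an attacker able to rewrite the entire log could recompute the chain unless the latest hash is also stored somewhere outside their reach.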

3.3 Infrastructure disruption through cyber-physical interactions

Digital infrastructure faces significant risks from traditional cyber threats, including distributed denial-of-service (DDoS) attacks, ransomware, and the exploitation of software vulnerabilities. The consequences of these threats are amplified within AI data centers, given the inherent link between digital systems and the physical demands of these facilities. [40]

Because of the high energy use and strict temperature controls, even small problems with building management systems, power distribution units, or cooling systems can trigger automatic shutdowns. These shutdowns are meant to protect sensitive equipment.
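The protective behavior described above can be pictured as a simple threshold policy. The sketch below is illustrative only: the `ThermalPolicy` class, the `safeguard_action` function, and both temperature thresholds are assumptions chosen for the example, and real limits are vendor- and facility-specific.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ThermalPolicy:
    # Hypothetical thresholds in degrees Celsius.
    throttle_at_c: float = 85.0
    shutdown_at_c: float = 95.0


def safeguard_action(gpu_temp_c: float, policy: ThermalPolicy = ThermalPolicy()) -> str:
    """Return the protective action for a single temperature reading."""
    if gpu_temp_c >= policy.shutdown_at_c:
        return "shutdown"
    if gpu_temp_c >= policy.throttle_at_c:
        return "throttle"
    return "normal"


# A slow upward drift, as might follow a degraded or tampered cooling setpoint.
readings = [78.0, 83.5, 86.0, 91.2, 95.4]
actions = [safeguard_action(t) for t in readings]
print(actions)  # ['normal', 'normal', 'throttle', 'throttle', 'shutdown']
```

The point of the sketch is that the safeguard itself works as intended: whoever can influence the cooling system can cause an outage without touching the compute hardware or its software.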

Furthermore, attackers have begun to investigate vulnerabilities in the operational technology (OT) and industrial control systems (ICS) that support data center facilities and power, thus establishing a potentially disruptive attack vector. [41]

Attacks targeting orchestration platforms, cluster schedulers, or configuration management software can disrupt training and inference processes, even without directly damaging the hardware.

Furthermore, ransomware attacks can encrypt critical components such as checkpoints, configuration files, or vital process management databases, thereby hindering recovery efforts and precipitating significant operational difficulties. [42]

Given the elevated sensitivity of artificial intelligence workloads to latency and their considerable computational requirements, even minor performance degradations within network or storage systems can engender severe repercussions. Consequently, these performance deficiencies may lead to frequent violations of service-level agreements (SLAs) and substantial disruptions to digital services.

3.4 Supply chain and firmware attacks

AI data centers depend on intricate supply chains that integrate hardware, software, and firmware elements. Essential hardware, including GPUs, network interface cards, baseboard management controllers (BMCs), and smart power systems, presents vulnerabilities that can be exploited via malicious firmware updates or components pre-infected with malware. [43]

Concurrently, software supply chain attacks, which target AI frameworks, software libraries, and container images, are increasingly concerning, particularly considering the prevalent utilization of open-source libraries across numerous systems.

Recent security guidelines for AI systems and large language models highlight additional risks associated with compromised models, insecure plugins, and undocumented external connectors that link AI systems to external data sources and tools.

In large-scale data centers, where running thousands of nodes and services requires continuous updates, ensuring firmware integrity, verifying code provenance, and securing software build pipelines present a significant operational challenge. [44] If a supply chain breach is successful, attackers can completely bypass perimeter defenses and gain deep, persistent access to critical AI infrastructure for extended periods of time.
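At its simplest, the integrity check mentioned above amounts to comparing an artifact's cryptographic digest against a trusted manifest before installation. The sketch below assumes such a manifest already exists and was obtained through an authenticated channel (for example, a signed release); the file names, the sample image bytes, and the `verify_artifact` function are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(blob: bytes) -> str:
    return hashlib.sha256(blob).hexdigest()


def verify_artifact(name: str, blob: bytes, manifest: dict[str, str]) -> bool:
    """Return True only if the artifact is listed and its digest matches the manifest."""
    expected = manifest.get(name)
    if expected is None:
        return False  # artifacts missing from the manifest are rejected by default
    # compare_digest avoids leaking how many leading characters matched.
    return hmac.compare_digest(sha256_hex(blob), expected)


# Hypothetical firmware image; the manifest is assumed to come from a trusted source.
good_image = b"bmc-firmware-v1.2.3"
manifest = {"bmc.bin": sha256_hex(good_image)}

print(verify_artifact("bmc.bin", good_image, manifest))            # True
print(verify_artifact("bmc.bin", good_image + b"\x00", manifest))  # False
print(verify_artifact("nic.bin", good_image, manifest))            # False
```

The digest check is only as trustworthy as the manifest. Verifying the manifest's signature and protecting the signing keys are separate problems that this sketch does not address.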

4. Attack scenarios and potential impacts

As operational organizations and critical infrastructure increasingly rely on AI systems, the diversity and complexity of potential attack scenarios are likely to grow. The unique vulnerabilities of the AI environment stem from the interplay between data pipelines, complex models, distributed computing, and the physical components of the infrastructure.

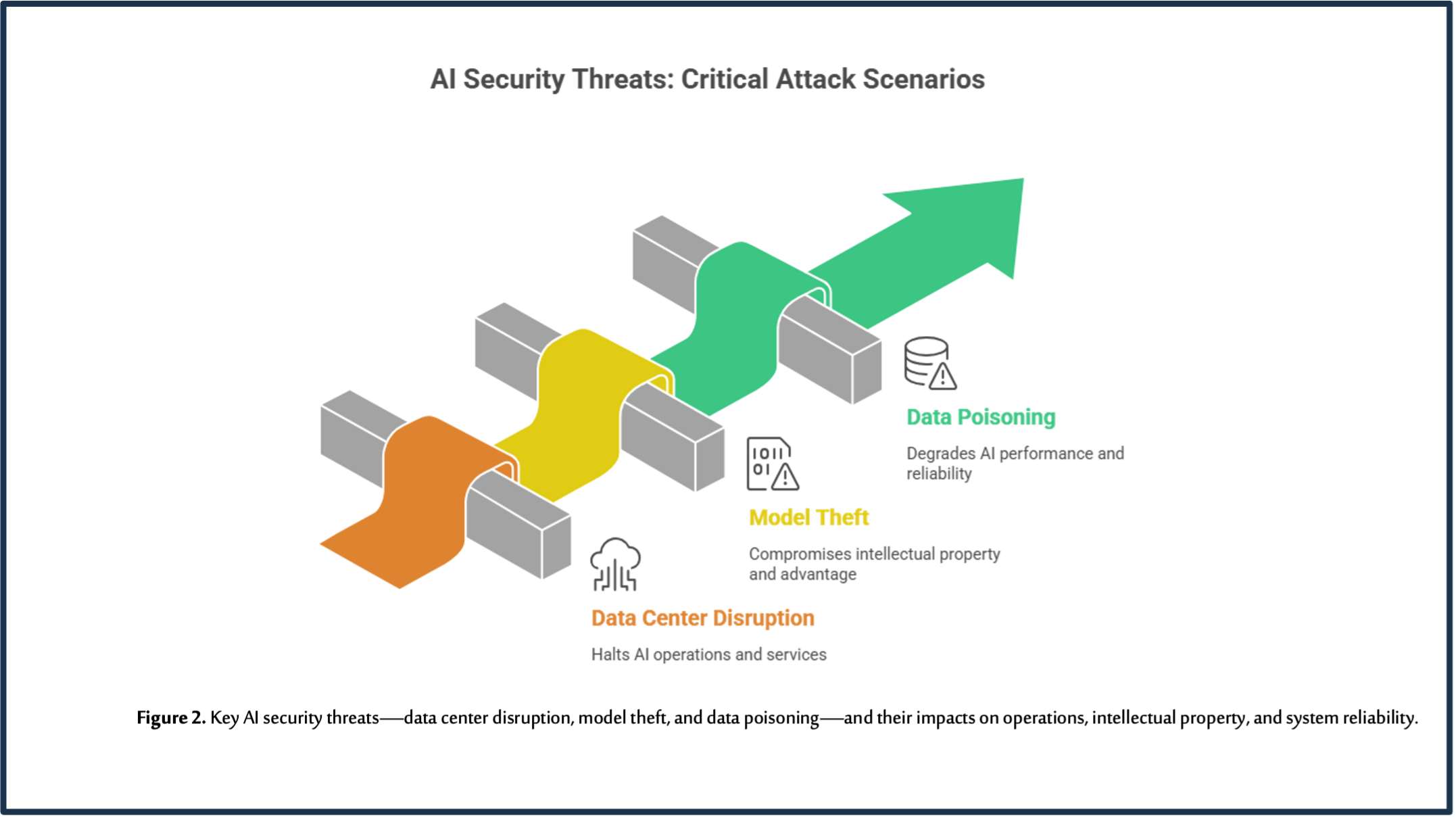

A breach in an AI environment can have cascading effects that spread across the entire digital ecosystem. AI data centers face several key attack scenarios that can significantly impact operational processes, intellectual property, and system reliability.

As illustrated in Figure 2, these attacks typically occur along three main paths:

- Data poisoning

- Model theft

- Data center disruption

Each of these paths can lead to widespread operational and strategic repercussions that extend across multiple sectors increasingly reliant on AI-powered systems.

4.1 First Scenario: Disruption of a Major AI Data Center

Consider a scenario in which a regional AI data center, crucial for essential services in finance, healthcare, and logistics across multiple nations, becomes the target of a coordinated cyberattack. Here, a state-sponsored threat actor identifies a vulnerable remote access point within the building management system, which governs the center’s power and cooling infrastructure.

Following system access, the attackers implement subtle yet intentional alterations to the thermal control settings, thereby intensifying the thermal strain on the computing infrastructure. Concurrently, they initiate a large-scale distributed denial-of-service (DDoS) attack against AI service endpoints, with the aim of overwhelming and disorienting the cyber defense teams.

As cooling systems degrade, automated safeguards initiate a reduction in the operational capacity of GPU-based computing clusters, ultimately leading to their shutdown to safeguard essential infrastructure. The repercussions of this disruption rapidly propagate throughout the sectors dependent on these services. Consequently, financial sector fraud detection systems experience deceleration, electronic payment gateways become inoperative, logistics systems fail to refresh transport routes, and certain hospitals lose access to AI-driven triage tools. [45]

Although recovery protocols and the relocation of operations to alternative data centers in different geographical locations are available, the capabilities of these centers are constrained by limitations in both capacity and latency. This necessitates that operators curtail certain non-essential computing loads to ensure the prioritization of critical services.

The event triggers market fluctuations and increased public concern, forcing regulators to closely examine the vulnerability of AI infrastructure to potential breaches. Even after normal operations resume, the service provider's reputation suffers. Authorities, in turn, insist on more stringent classification and protection measures for AI data centers, designating them as critical infrastructure. [46] This situation underscores the far-reaching, international consequences of attacks aimed at specialized AI infrastructure.
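The cascade in this scenario can be reasoned about as a walk over a dependency graph. The following is a minimal sketch, assuming a hand-written and entirely hypothetical dependency map; the service names and the `affected_services` function are invented for illustration and do not describe any real deployment.

```python
from collections import deque

# Hypothetical map: each key is depended on by the services in its list.
DEPENDENTS = {
    "ai-datacenter-region-1": ["fraud-scoring", "route-optimizer", "triage-assistant"],
    "fraud-scoring": ["payment-gateway"],
    "route-optimizer": ["freight-dispatch"],
    "triage-assistant": [],
    "payment-gateway": [],
    "freight-dispatch": [],
}


def affected_services(failed: str, dependents: dict[str, list[str]]) -> list[str]:
    """Breadth-first walk returning every service downstream of the failed node."""
    seen = {failed}
    order: list[str] = []
    queue = deque([failed])
    while queue:
        node = queue.popleft()
        for child in dependents.get(node, []):
            if child not in seen:
                seen.add(child)
                order.append(child)
                queue.append(child)
    return order


print(affected_services("ai-datacenter-region-1", DEPENDENTS))
# ['fraud-scoring', 'route-optimizer', 'triage-assistant', 'payment-gateway', 'freight-dispatch']
```

Breadth-first order lists directly dependent services before second-order ones, which mirrors how an outage would be felt first by AI services and then by the sector systems built on them.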

4.2 Second Scenario: Theft of a Strategic AI Model

In another scenario, an advanced persistent threat (APT) group targets an AI data center hosting an advanced model used in commercial applications as well as in sensitive national security tasks. The attack begins with a targeted phishing campaign, coupled with the exploitation of a supply chain vulnerability. This allows the attackers to obtain system credentials and exploit an unpatched vulnerability in an internal dashboard used to manage compute orchestration. [47]

Once inside the system, the attackers move laterally within the network until they locate the storage units containing the encrypted model checkpoints and fine-tuning datasets.

Over several weeks, the attackers gradually extract parts of the model and conceal them within normal data traffic before later reassembling the entire model within their own technical environment. This access allows them to accelerate their own development of AI technologies, to create advanced attack tools such as automated vulnerability discovery systems and advanced phishing campaigns, and to support influence operations. [48]

This type of theft represents a dual threat; it undermines the global competitive advantage of the original model developer and creates new challenges for export control policies for advanced technologies and governance frameworks associated with high-capacity models.

If attackers were to implant sophisticated backdoors within the production model, it could lead to the systematic distortion of decisions and analyses based on it, potentially impacting financial markets or security assessments. This scenario underscores the urgent need to strengthen model security, monitoring mechanisms, and access management within AI data centers.
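Gradual extraction of the kind described in this scenario stays under per-transfer alarms, so one monitoring idea is to track cumulative outbound volume per destination over a trailing window. The sketch below is a simplified illustration: the `flag_slow_exfiltration` function, the 14-day window, the 20 GB budget, and the traffic figures are all assumptions, and the addresses come from ranges reserved for documentation.

```python
from collections import defaultdict


def flag_slow_exfiltration(
    events: list[tuple[int, str, int]], window_days: int, budget_bytes: int
) -> list[str]:
    """events holds (day, destination, bytes_out) tuples sorted by day.

    Returns destinations whose outbound volume inside any trailing window
    of `window_days` days exceeds `budget_bytes`.
    """
    history: dict[str, list[tuple[int, int]]] = defaultdict(list)
    flagged: set[str] = set()
    for day, dest, nbytes in events:
        history[dest].append((day, nbytes))
        # Keep only transfers inside the trailing window ending on this day.
        history[dest] = [(d, b) for d, b in history[dest] if day - d < window_days]
        if sum(b for _, b in history[dest]) > budget_bytes:
            flagged.add(dest)
    return sorted(flagged)


GB = 10**9
# Hypothetical traffic: 2 GB per day to one host for three weeks,
# plus a single 1 GB transfer to another host.
events = [(day, "203.0.113.7", 2 * GB) for day in range(21)]
events.append((5, "198.51.100.9", 1 * GB))
events.sort(key=lambda e: e[0])

print(flag_slow_exfiltration(events, window_days=14, budget_bytes=20 * GB))
# ['203.0.113.7']
```

No single 2 GB transfer looks unusual here; the destination is flagged only once the windowed total passes the budget. A real deployment would also have to account for traffic hidden inside legitimate destinations, which a per-destination total cannot see.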

4.3 Third Scenario: Large‑Scale Data Poisoning

In a third scenario, a criminal organization conducts a long-term data poisoning operation, specifically targeting the training data used by fraud and anomaly detection models in a major AI data center. [49]

The attackers gain access to external data providers and exploit weaknesses in the verification processes at data entry points. They then insert carefully crafted malicious samples into financial transaction logs and industrial measurement data.

Based on research showing that even small amounts of contaminated data can significantly affect a model’s performance, the attackers create training samples that gradually shift the model’s decision boundaries. As a result, certain patterns of money laundering or industrial disruption are misclassified as normal or safe activities.

Because the model's performance metrics generally remain within acceptable parameters, operators initially have no obvious reason to suspect a security breach. The criminal organization then capitalizes on these blind spots to launder money or carry out clandestine industrial sabotage, all while maintaining a low likelihood of being detected. The attack is only identified following a significant incident, and a thorough forensic investigation subsequently uncovers evidence of the poisoning within archived datasets and pipeline logs.

The remediation process requires rebuilding datasets, retraining models, and reviewing data governance practices, resulting in high operational costs and service disruptions. [50]

This scenario highlights the importance of developing advanced data engineering practices, data provenance controls, and data pipeline security in AI data centers, going beyond traditional defense models based on protecting network boundaries.
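The scenario depends on aggregate metrics staying acceptable while one targeted class of inputs is misclassified. A small worked example with invented numbers shows why overall accuracy alone can hide this; the evaluation set below is synthetic and the helper functions are illustrative.

```python
def accuracy(labels: list[int], preds: list[int]) -> float:
    return sum(l == p for l, p in zip(labels, preds)) / len(labels)


def recall(labels: list[int], preds: list[int], positive: int = 1) -> float:
    """Share of truly positive cases that the model also predicted as positive."""
    hits = [p == positive for l, p in zip(labels, preds) if l == positive]
    return sum(hits) / len(hits) if hits else float("nan")


# Synthetic evaluation set: 1 = fraudulent, 0 = legitimate.
# 990 legitimate transactions, all classified correctly.
labels = [0] * 990
preds = [0] * 990
# 10 fraudulent transactions matching the attacker's pattern, all missed.
labels += [1] * 10
preds += [0] * 10

print(f"overall accuracy: {accuracy(labels, preds):.3f}")  # overall accuracy: 0.990
print(f"fraud recall:     {recall(labels, preds):.3f}")    # fraud recall:     0.000
```

With 99% accuracy and zero recall on the targeted pattern, a dashboard that reports only the first number would show nothing wrong. Tracking recall on specific, high-risk slices is one way to surface this kind of shift earlier.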

5. Strategic Protection Framework: Cyber Shield for AI Infrastructure (CSFAII)

5.1 The concept of a cyber shield for artificial intelligence (CSFAII)

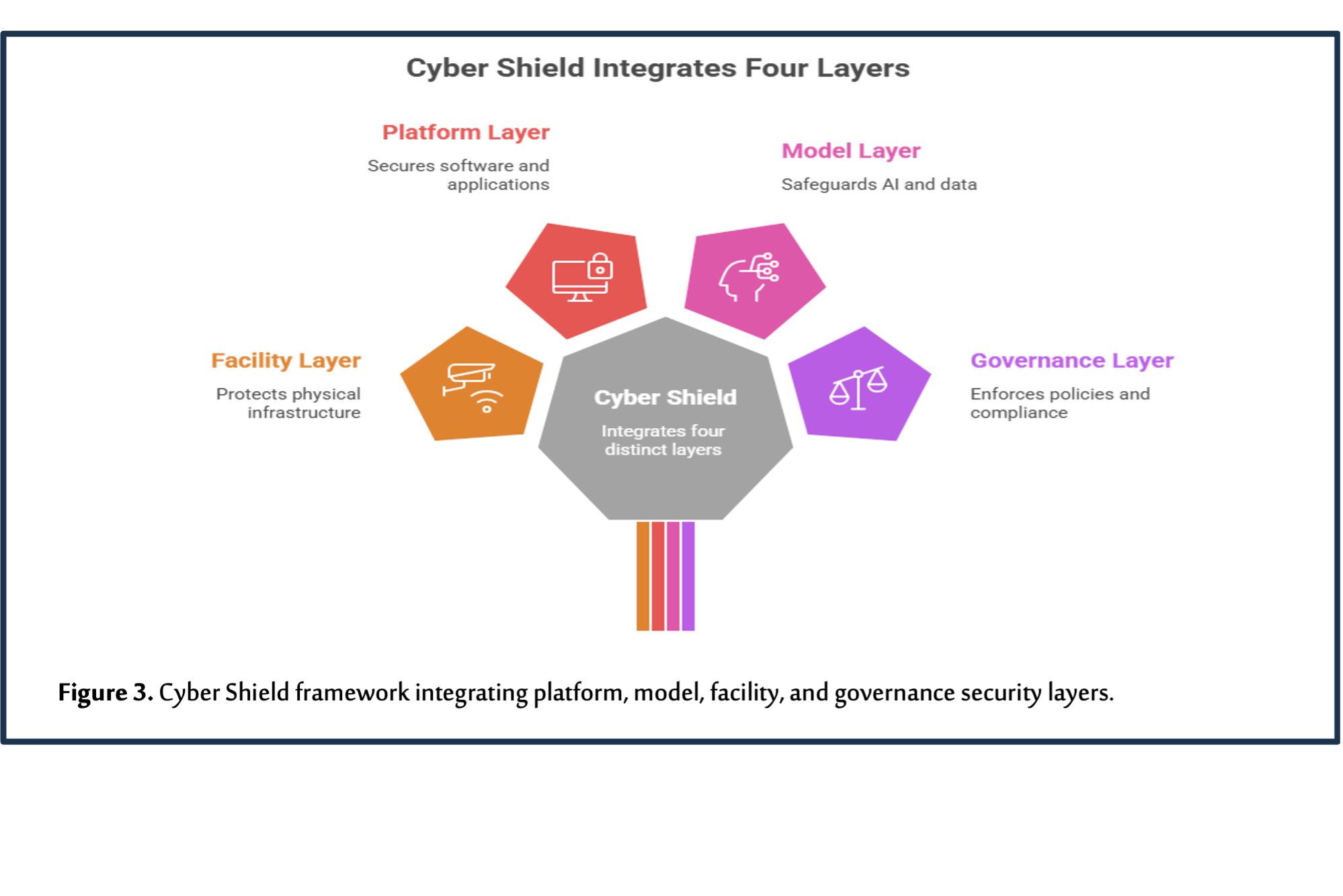

This research proposes a strategic protection framework known as the AI Infrastructure Cyber Shield. This multi-layered framework treats AI data centers as part of critical infrastructure, requiring the integration of physical and cyber protection measures and corporate governance frameworks. [51]

As illustrated in Figure 3, this framework is based on four main security layers that form an integrated defense system for AI infrastructure:

1. Platform Layer: This includes protecting cloud computing systems, cluster management frameworks, and orchestration and resource management tools.

2. Model Layer: This encompasses safeguarding foundation models, managing access to model weights and training data, and implementing methods to identify tampering or hidden vulnerabilities.

3. Facility Layer: This layer includes the security of power and cooling systems, building management, and the physical infrastructure found in data centers.

4. Governance Layer: This layer involves regulatory frameworks, risk management, and the collaboration between governments and technology companies. Its goal is to ensure the security and long-term viability of AI infrastructure.

By combining these four layers, the AI Infrastructure Cyber Shield aims to create a complete defense system. This system is designed to reduce systemic risks and improve the resilience of AI data centers against advanced cyber threats.

The Cyber Shield Framework for AI Infrastructure:

The AI Infrastructure Cyber Shield Framework comprises four interrelated security layers that constitute a cohesive defense system:

- Physical Infrastructure Layer: This layer encompasses physical security measures for data centers, ensuring the continuity of power and cooling systems, safeguarding operational technology (OT) and industrial control systems (ICS) networks, and enforcing strict separation between management and control networks and user or tenant networks.

- Platform Layer: This layer emphasizes the protection of cloud computing infrastructure and operational platforms through the implementation of zero-trust models, the enhancement of identity and access management systems, the facilitation of continuous system monitoring, and the utilization of AI-driven threat detection technologies specifically tailored for monitoring AI-related workloads.

- Model and Data Layer: This layer requires rigorous access controls for models, encryption, model identification mechanisms (e.g., digital tagging or fingerprinting), governance of data and its provenance, and defensive mechanisms designed to prevent or detect data poisoning attacks within data pipelines.

- Governance Layer: This layer entails the formal recognition of AI data centers as critical infrastructure. It involves strengthening regulatory oversight, mandating incident reporting, encouraging public-private collaboration, and setting international benchmarks to mitigate risks to AI infrastructure.

By integrating these four layers, the AI Infrastructure Cyber Shield moves away from fragmented, provider-dependent security methods toward a comprehensive, strategic approach to protecting AI infrastructure.
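The access controls named for the platform and model layers can be illustrated with a deny-by-default decision function, in the spirit of a zero-trust model. This is a minimal sketch, not part of the framework itself: the `AccessRequest` fields, the `GRANTS` table, and the principal and resource names are all hypothetical, and a real deployment would delegate these checks to an identity provider and a policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AccessRequest:
    principal: str           # authenticated identity (user or service account)
    action: str              # e.g. "read" or "export"
    resource: str            # e.g. "weights/fraud-detector-v3"
    identity_verified: bool  # identity re-verified for this session
    device_attested: bool    # request comes from a managed, attested host


# Hypothetical explicit grants: (principal, action, resource).
GRANTS = frozenset({
    ("svc-inference", "read", "weights/fraud-detector-v3"),
    ("alice", "read", "weights/fraud-detector-v3"),
})


def is_allowed(req: AccessRequest, grants: frozenset = GRANTS) -> bool:
    """Allow only when every check passes; anything else is denied."""
    if not (req.identity_verified and req.device_attested):
        return False
    # No implicit trust: the exact (principal, action, resource) must be granted.
    return (req.principal, req.action, req.resource) in grants
```

The design choice worth noting is that there is no fallback rule: a request for an action that was never granted, such as exporting weights, is refused even when the identity and device checks succeed.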

5.2 Shield Partnerships

As cloud computing and AI services transcend national borders, protecting AI data centers relies heavily on collaboration between governments and the private sector.

Public-private partnerships can contribute to:

- Joint incident response planning

- Threat intelligence sharing

- Simulated attack exercises targeting AI infrastructure [52]

Information Sharing and Analysis Centers (ISACs) and AI security alliances play a crucial role in pooling expertise and disseminating best practices across industry stakeholders. [53]

Industry associations and standards-setting bodies are pivotal in developing core standards for security controls, certification mechanisms, and audit frameworks for AI data centers, aligned with cloud computing security standards, AI risk management, and critical infrastructure protection. [54]

These shared frameworks help reduce regulatory fragmentation, clarify expectations for operators and customers, and enable regulators to more effectively assess compliance and systemic risk.

6. Discussion

The evidence presented in this study indicates that AI data centers have become a pivotal element of the contemporary socio-technical architecture. They are simultaneously an engine of economic activity, a pillar of national security, and a critical infrastructure for vital digital services.

From a critical infrastructure theory perspective, these centers meet essential criteria for critical infrastructure, including strategic importance, irreplaceability, and the potential for cascading effects in the event of failure.

From an AI security perspective, these centers concentrate risks related to models and data that traditional IT security approaches cannot fully address.

From a digital geopolitics perspective, competition for AI infrastructure has become a significant factor in the balance of power between states and corporations. States that host or control large AI data centers can exert influence through setting technical standards, controlling market access, or engaging in security cooperation. Conversely, states that rely on foreign cloud services may face risks related to external control or potential service disruptions. [55] These dynamics are linked to ongoing debates about digital sovereignty and strategic independence, particularly for regions seeking to develop sovereign AI capabilities.

In this context, the AI Infrastructure Cyber Shield framework offers a practical approach to addressing these challenges. [56] Instead of viewing data center security as solely a technical issue for service providers, the framework treats it as a shared strategic responsibility requiring coordination across facilities, platforms, models, and governance mechanisms.

7. Conclusion

AI data centers are becoming high-value targets in the contemporary cyber threat landscape due to their massive concentration of computing power, proprietary models, and sensitive data, as well as their pivotal role in supporting critical services across multiple sectors.

Contemporary discourse surrounding AI governance predominantly emphasizes model behavior and ethical implications of applications, often neglecting the fundamental vulnerabilities inherent in the infrastructure that supports these systems. This research advocates for the formal designation of AI data centers as critical infrastructure, proposing their protection via a multi-layered framework: the AI Infrastructure Cyber Shield. This shield encompasses secure facility design, agile platform architecture, safeguarding of models and data, and coordinated governance mechanisms.

Through an examination of realistic threat vectors and potential attack scenarios, such as operational disruption, model theft, and data poisoning, the study underscores the systemic risks linked to breaches of AI infrastructure.

Securing the physical and digital foundations of artificial intelligence is not merely a technical challenge; it is a strategic necessity. As AI becomes more deeply integrated into economic, military, and societal systems, protecting the infrastructure that underpins it becomes a pivotal component of global security, resilience, and governance in the digital era.

8. Policy Recommendations

8.1 Classifying AI Data Centers as Critical Infrastructure

Policymakers need to revise the definitions of critical infrastructure so that AI data centers and their computing clusters are explicitly included. [57] This classification should encompass both direct and indirect dependencies, for instance models that support the security of other critical infrastructure.

Once these facilities are designated as critical infrastructure, their operators should be required to:

- Conduct mandatory risk assessments and interdependency mapping.

- Implement baseline security and operational resilience measures that reflect the facilities' strategic significance.

- Participate in national cybersecurity exercises and share incident information with the relevant authorities.

These steps will ensure that AI infrastructure receives the same level of attention as energy, telecommunications, and financial market infrastructure.
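Interdependency mapping can be pictured as a directed graph in which an edge points from a facility or service to everything that depends on it. The sketch below, under that assumption, walks the graph breadth-first to list what would be affected if one node failed. The map contents and node names are hypothetical examples, not data from this study.

```python
from collections import deque

# Hypothetical map: each key lists the services that depend on it.
DEPENDENTS = {
    "regional-power-feed": ["ai-datacenter-a"],
    "ai-datacenter-a": ["fraud-detection-model", "grid-forecast-model"],
    "fraud-detection-model": ["payment-clearing"],
    "grid-forecast-model": ["grid-dispatch"],
}


def downstream_impact(dependents: dict[str, list[str]], failed: str) -> list[str]:
    """Return every service affected by `failed`, nearest dependents first."""
    seen = {failed}
    queue = deque([failed])
    affected = []
    while queue:
        node = queue.popleft()
        for child in dependents.get(node, []):
            if child not in seen:  # guards against cycles in the map
                seen.add(child)
                affected.append(child)
                queue.append(child)
    return affected
```

Calling `downstream_impact(DEPENDENTS, "regional-power-feed")` on this example map lists the data center first, then the two models it hosts, then the services that rely on those models, which is the kind of cascading path a risk assessment would need to document.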

8.2 Developing International Security Standards

National initiatives should be strengthened through international security standards specifically designed for AI data centers. Multilateral organizations and technical bodies can collaborate with industry to develop guidelines that include:

- Secure facility design

- Secure operational processes

- Protection of models and data

- Ensuring the integrity of supply chains

These standards should be based on lessons learned from AI risk management frameworks, cloud computing security best practices, and critical infrastructure protection guidelines. [58]

Unified standards also help reduce regulatory gaps, facilitate cross-border mutual recognition of certifications and audit results, and support efforts to control the export of sensitive technologies and ensure the integrity of high-capacity models. [59]

8.3 Building Strategic Cyber Partnerships

Governments and businesses should build strategic cyber partnerships specifically focused on protecting AI infrastructure. Regional alliances can facilitate the coordination of monitoring and incident response protocols for data centers that serve multiple nations, enabling the sharing of near-real-time telemetry and threat intelligence.

Furthermore, collaborative research and development initiatives can investigate the application of AI to safeguarding AI systems. This includes the development of automated response tools designed to counteract attacks, sophisticated anomaly detection systems tailored for operational technology environments, and technologies aimed at identifying model compromise or data poisoning. [60]

Consequently, these partnerships contribute to mitigating security capability deficiencies, especially for nations and small enterprises that depend on global AI services but possess limited advanced security resources.

By treating AI infrastructure as a shared strategic asset, these initiatives can enhance collective resilience and reduce incentives to carry out attacks that could disrupt the global digital order.

References

- Hostettler, Silvia, Samira Najih Besson, and Jean-Claude Bolay. 2018. Technologies for Development. https://doi.org/10.1007/978-3-319-91068-0.

- Dwivedi, Yogesh K., Laurie Hughes, Elvira Ismagilova, Gert Aarts, Crispin Coombs, Tom Crick, Yanqing Duan, et al. 2019. “Artificial Intelligence (AI): Multidisciplinary Perspectives on Emerging Challenges, Opportunities, and Agenda for Research, Practice and Policy.” International Journal of Information Management 57 (August): 101994. https://doi.org/10.1016/j.ijinfomgt.2019.08.002.

- Florido-Benítez, Lázaro. 2024. “The Types of Hackers and Cyberattacks in the Aviation Industry.” Journal of Transportation Security 17 (1). https://doi.org/10.1007/s12198-024-00281-9.

- Ajibade, Olusola, M Faruk, F Plabon, U Saha, M Hossain, G Osho, J Omisola, et al. 2025. “AI-Powered Project Control Dashboards for Proactive Cybersecurity Event Response and Strategic Decision Support in Critical Infrastructure Programs.” International Journal of Computer Applications Technology and Research, August. https://doi.org/10.7753/ijcatr1408.1007.

- Zhou, Zhi, Xu Chen, En Li, Liekang Zeng, Ke Luo, and Junshan Zhang. 2019. “Edge Intelligence: Paving the Last Mile of Artificial Intelligence With Edge Computing.” Proceedings of the IEEE 107 (8): 1738–62. https://doi.org/10.1109/jproc.2019.2918951.

- Buck, Christoph, Eileen Doctor, Jasmin Hennrich, Jan Jöhnk, and Torsten Eymann. 2022. “General Practitioners’ Attitudes Toward Artificial Intelligence–Enabled Systems: Interview Study.” Journal of Medical Internet Research 24 (1): e28916. https://doi.org/10.2196/28916.

- Capra, Maurizio, Beatrice Bussolino, Alberto Marchisio, Guido Masera, Maurizio Martina, and Muhammad Shafique. 2020. “Hardware and Software Optimizations for Accelerating Deep Neural Networks: Survey of Current Trends, Challenges, and the Road Ahead.” IEEE Access 8 (January): 225134–80. https://doi.org/10.1109/access.2020.3039858.

- Haridas, Anoop. 2018. “KOLAM : Human Computer Interfaces Fro Visual Analytics in Big Data Imagery.” https://doi.org/10.32469/10355/68900.

- Dwivedi, Yogesh K., Laurie Hughes, Elvira Ismagilova, Gert Aarts, Crispin Coombs, Tom Crick, Yanqing Duan, et al. 2019. “Artificial Intelligence (AI): Multidisciplinary Perspectives on Emerging Challenges, Opportunities, and Agenda for Research, Practice and Policy.” International Journal of Information Management 57 (August): 101994. https://doi.org/10.1016/j.ijinfomgt.2019.08.002.

- Yang, Jun, Wenjing Xiao, Chun Jiang, M. Shamim Hossain, Ghulam Muhammad, and Syed Umar Amin. 2018. “AI-Powered Green Cloud and Data Center.” IEEE Access 7 (December): 4195–4203. https://doi.org/10.1109/access.2018.2888976.

- Bokhari, Syed Asad Abbas, and Seunghwan Myeong. 2023. “The Influence of Artificial Intelligence on E-Governance and Cybersecurity in Smart Cities: A Stakeholder’s Perspective.” IEEE Access 11 (January): 69783–97. https://doi.org/10.1109/access.2023.3293480.

- Williamson, Hugh F., and Sabina Leonelli. 2022. Towards Responsible Plant Data Linkage: Data Challenges for Agricultural Research and Development. https://doi.org/10.1007/978-3-031-13276-6.

- Rasheed, Adil, Omer San, and Trond Kvamsdal. 2020. “Digital Twin: Values, Challenges and Enablers From a Modeling Perspective.” IEEE Access 8 (January): 21980–22012. https://doi.org/10.1109/access.2020.2970143.

- Parker, Sharon K., and Gudela Grote. 2019. “Automation, Algorithms, and Beyond: Why Work Design Matters More Than Ever in a Digital World.” Applied Psychology 71 (4): 1171–1204. https://doi.org/10.1111/apps.12241.

- D, Harichandana. 2025. “Digital Payement Adoption in Emerging Markets.” International Journal on Science and Technology 16 (3). https://doi.org/10.71097/ijsat.v16.i3.7770.

- Ranaweera, Pasika, Anca Delia Jurcut, and Madhusanka Liyanage. 2021. “Survey on Multi-Access Edge Computing Security and Privacy.” IEEE Communications Surveys & Tutorials 23 (2): 1078–1124. https://doi.org/10.1109/comst.2021.3062546.

- Almalki, Jameel. 2023. “State-of-the-Art Research in Blockchain of Things for HealthCare.” Arabian Journal for Science and Engineering 49 (3): 3163–91. https://doi.org/10.1007/s13369-023-07896-5.

- Kianpour, Mazaher, and Shahid Raza. 2024. “More Than Malware: Unmasking the Hidden Risk of Cybersecurity Regulations.” International Cybersecurity Law Review 5 (1): 169–212. https://doi.org/10.1365/s43439-024-00111-7.

- Gill, Sukhpal Singh, Huaming Wu, Panos Patros, Carlo Ottaviani, Priyansh Arora, Victor Casamayor Pujol, David Haunschild, et al. 2024. “Modern Computing: Vision and Challenges.” Telematics and Informatics Reports 13 (January): 100116. https://doi.org/10.1016/j.teler.2024.100116.

- Batayneh, Rasha M. Al, Nasser Taleb, Raed A. Said, Muhammad Turki Alshurideh, Taher M. Ghazal, and Haitham M. Alzoubi. 2021. “IT Governance Framework and Smart Services Integration for Future Development of Dubai Infrastructure Utilizing AI and Big Data, Its Reflection on the Citizens Standard of Living.” In Advances in Intelligent Systems and Computing, 235–47. https://doi.org/10.1007/978-3-030-76346-6_22.

- Pietroni, Eva. 2025. “Multisensory Museums, Hybrid Realities, Narration, and Technological Innovation: A Discussion Around New Perspectives in Experience Design and Sense of Authenticity.” Heritage 8 (4): 130. https://doi.org/10.3390/heritage8040130.

- Brunetti, Federico, Dominik T. Matt, Angelo Bonfanti, Alberto De Longhi, Giulio Pedrini, and Guido Orzes. 2020. “Digital Transformation Challenges: Strategies Emerging From a Multi-stakeholder Approach.” The TQM Journal 32 (4): 697–724. https://doi.org/10.1108/tqm-12-2019-0309.

- Allam, Zaheer, Ayyoob Sharifi, Simon Elias Bibri, David Sydney Jones, and John Krogstie. 2022. “The Metaverse as a Virtual Form of Smart Cities: Opportunities and Challenges for Environmental, Economic, and Social Sustainability in Urban Futures.” Smart Cities 5 (3): 771–801. https://doi.org/10.3390/smartcities5030040.

- Saxena, Neetesh, Emma Hayes, Elisa Bertino, Patrick Ojo, Kim-Kwang Raymond Choo, and Pete Burnap. 2020. “Impact and Key Challenges of Insider Threats on Organizations and Critical Businesses.” Electronics 9 (9): 1460. https://doi.org/10.3390/electronics9091460.

- Jackson, Ilya, Dmitry Ivanov, Alexandre Dolgui, and Jafar Namdar. 2024. “Generative Artificial Intelligence in Supply Chain and Operations Management: A Capability-based Framework for Analysis and Implementation.” International Journal of Production Research 62 (17): 6120–45. https://doi.org/10.1080/00207543.2024.2309309.

- Ibrahim, Abdulkadir Abdullahi, Wilson Cheruiyot, and Michael W. Kimwele. 2017. “Data Security in Cloud Computing With Elliptic Curve Cryptography.” Global Society of Scientific Research and Researchers – International Journal of Computer 26 (1): 1–14. http://ijcjournal.org/index.php/InternationalJournalOfComputer/article/view/998.

- Hole, K.J., A.N. Klingsheim, L.-h. Netland, Y. Espelid, T. Tjostheim, and V. Moen. 2009. “Risk Assessment of a National Security Infrastructure.” IEEE Security & Privacy 7 (1): 34–41. https://doi.org/10.1109/msp.2009.17.

- Baduge, Shanaka Kristombu, Sadeep Thilakarathna, Jude Shalitha Perera, Mehrdad Arashpour, Pejman Sharafi, Bertrand Teodosio, Ankit Shringi, and Priyan Mendis. 2022. “Artificial Intelligence and Smart Vision for Building and Construction 4.0: Machine and Deep Learning Methods and Applications.” Automation in Construction 141 (June): 104440. https://doi.org/10.1016/j.autcon.2022.104440.

- Kopp, Emanuel, Lincoln Kaffenberger, and Christopher Wilson. 2017. “Cyber Risk, Market Failures, and Financial Stability.” IMF Working Paper 17 (185). https://doi.org/10.5089/9781484313787.001.

- Leung, Jade. 2019. “Who Will Govern Artificial Intelligence? Learning From the History of Strategic Politics in Emerging Technologies.” https://doi.org/10.5287/ora-wxrmo8pk4.

- Piers, Maud, Sean McCarthy, Nino Sievi, Viola Donzelli, Cemre Ç. Kadıoğlu Kumtepe, Matthias Lehmann, Crenguta Leaua, et al. 2025. Transforming Arbitration. Exploring the Impact of AI, Blockchain, Metaverse and Web3. Radboud University Press eBooks. https://doi.org/10.54195/fmvv7173.

- Chava, Karthik. 2023. “Integrating AI and Big Data in Healthcare: A Scalable Approach to Personalized Medicine.” Journal of Survey in Fisheries Sciences, January. https://doi.org/10.53555/sfs.v10i3.3576.

- Varga, Pal, Jozsef Peto, Attila Franko, David Balla, David Haja, Ferenc Janky, Gabor Soos, Daniel Ficzere, Markosz Maliosz, and Laszlo Toka. 2020. “5G Support for Industrial IoT Applications— Challenges, Solutions, and Research Gaps.” Sensors 20 (3): 828. https://doi.org/10.3390/s20030828.

- Theodorakopoulos, Leonidas, Alexandra Theodoropoulou, and Christos Klavdianos. 2025. “Interactive Viral Marketing Through Big Data Analytics, Influencer Networks, AI Integration, and Ethical Dimensions.” Journal of Theoretical and Applied Electronic Commerce Research 20 (2): 115. https://doi.org/10.3390/jtaer20020115.

- Pham, Quoc-Viet, Fang Fang, Vu Nguyen Ha, Md. Jalil Piran, Mai Le, Long Bao Le, Won-Joo Hwang, and Zhiguo Ding. 2020. “A Survey of Multi-Access Edge Computing in 5G and Beyond: Fundamentals, Technology Integration, and State-of-the-Art.” IEEE Access 8 (January): 116974–117017. https://doi.org/10.1109/access.2020.3001277.

- Ginzburg-Ganz, Elinor, Pavel Lifshits, Ram Machlev, Juri Belikov, Ziv Krieger, and Yoash Levron. 2025. “Technical Challenges of AI Data Center Integration Into Power Grids—A Survey.” Energies 19 (1): 137. https://doi.org/10.3390/en19010137.

- Theodorakopoulos, Leonidas, Alexandra Theodoropoulou, and Christos Klavdianos. 2025. “Interactive Viral Marketing Through Big Data Analytics, Influencer Networks, AI Integration, and Ethical Dimensions.” Journal of Theoretical and Applied Electronic Commerce Research 20 (2): 115. https://doi.org/10.3390/jtaer20020115.

- Sun, Gan, Yang Cong, Jiahua Dong, Qiang Wang, Lingjuan Lyu, and Ji Liu. 2021. “Data Poisoning Attacks on Federated Machine Learning.” IEEE Internet of Things Journal 9 (13): 11365–75. https://doi.org/10.1109/jiot.2021.3128646.

- Ndibe, Ogochukwu Susan. 2025. “AI-Driven Forensic Systems for Real-Time Anomaly Detection and Threat Mitigation in Cybersecurity Infrastructures.” International Journal of Research Publication and Reviews 6 (5): 389–411. https://doi.org/10.55248/gengpi.6.0525.1991.

- Perwej, Yusuf, Syed Qamar Abbas, Jai Pratap Dixit, Nikhat Akhtar, and Anurag Kumar Jaiswal. 2021. “A Systematic Literature Review on the Cyber Security.” International Journal of Scientific Research and Management (IJSRM) 9 (12): 669–710. https://doi.org/10.18535/ijsrm/v9i12.ec04.

- Makrakis, Georgios Michail, Constantinos Kolias, Georgios Kambourakis, Craig Rieger, and Jacob Benjamin. 2021. “Industrial and Critical Infrastructure Security: Technical Analysis of Real-Life Security Incidents.” IEEE Access 9 (January): 165295–325. https://doi.org/10.1109/access.2021.3133348.

- Jimmy, Fnu. 2023. “Understanding Ransomware Attacks: Trends and Prevention Strategies.” Journal of Knowledge Learning and Science Technology ISSN 2959-6386 (Online) 2 (1): 180–210. https://doi.org/10.60087/jklst.vol2.n1.p214.

- Gupta, Maanak, Charankumar Akiri, Kshitiz Aryal, Eli Parker, and Lopamudra Praharaj. 2023. “From ChatGPT to ThreatGPT: Impact of Generative AI in Cybersecurity and Privacy.” IEEE Access 11 (January): 80218–45. https://doi.org/10.1109/access.2023.3300381.

- Umer, Mifta Ahmed, Elefelious Getachew Belay, and Luis Borges Gouveia. 2024. “Leveraging Artificial Intelligence and Provenance Blockchain Framework to Mitigate Risks in Cloud Manufacturing in Industry 4.0.” Electronics 13 (3): 660. https://doi.org/10.3390/electronics13030660.

- Ande, Virinchi, Adegoke Adisa, Micheal Oluwamuyiwa Odunsi, and Qozeem Odeniran. 2025. “AI Driven Approaches for Real Time Fraud Detection in Us Supply Chain Management: Challenges and Opportunities.” Computer Science & IT Research Journal 6 (9): 759–81. https://doi.org/10.51594/csitrj.v6i9.2075.

- Adegbenjo, Samuel. 2025. Assessing the Security Capabilities and Risks of Large Language Models: Implications for Critical Infrastructure and Governance.

- Tan, Zhuoran, Shameem Puthiya Parambath, Christos Anagnostopoulos, Jeremy Singer, and Angelos K. Marnerides. 2025. “Advanced Persistent Threats Based on Supply Chain Vulnerabilities: Challenges, Solutions, and Future Directions.” IEEE Internet of Things Journal 12 (6): 6371–95. https://doi.org/10.1109/jiot.2025.3528744.

- Iturbe, Eider, Oscar Llorente-Vazquez, Angel Rego, Erkuden Rios, and Nerea Toledo. 2024. “Unleashing Offensive Artificial Intelligence: Automated Attack Technique Code Generation.” Computers & Security 147 (August): 104077. https://doi.org/10.1016/j.cose.2024.104077.

- Ndibe, Ogochukwu Susan. 2025. “AI-Driven Forensic Systems for Real-Time Anomaly Detection and Threat Mitigation in Cybersecurity Infrastructures.” International Journal of Research Publication and Reviews 6 (5): 389–411. https://doi.org/10.55248/gengpi.6.0525.1991.

- Adebayo, Motunrayo. 2025. “Unlearning in AI: Techniques and Frameworks for Data Deletion in Pretrained Models Under Legal and Ethical Constraints.” International Journal of Latest Technology in Engineering Management & Applied Science 14 (8): 841–63. https://doi.org/10.51583/ijltemas.2025.1408000109.

- “CYBERSHIELD: A Competitive Simulation Environment for Training AI in Cybersecurity.” 2024. IEEE Xplore, September 2, 2024. https://ieeexplore.ieee.org/document/10710208.

- Sharma, Mohit, Sajud E., Naranjan Goklani, and Krishna Chaubey. 2025. “Public-Private Partnerships in Cybersecurity: A Strategic Approach to National Threat Management.” Journal of Electrical Systems 21 (1): 974–988.

- Chang, Kaiju, and Hsini Huang. 2023. “Exploring the Management of Multi-sectoral Cybersecurity Information-sharing Networks.” Government Information Quarterly 40 (4): 101870. https://doi.org/10.1016/j.giq.2023.101870.

- Bhat, Showkat Ahmad, and Nen-Fu Huang. 2021. “Big Data and AI Revolution in Precision Agriculture: Survey and Challenges.” IEEE Access 9 (January): 110209–22. https://doi.org/10.1109/access.2021.3102227.

- Paz, Bárbara Jennifer. 2020. “Kai-Fu-Lee (2019): AI Superpowers—China, Silicon Valley and the New World Order.” AI & Society 35 (3): 771–72. https://doi.org/10.1007/s00146-020-00991-3.

- Algarni, Abdullah M., and Vijey Thayananthan. 2025. “Cybersecurity for Analyzing Artificial Intelligence (AI)-Based Assistive Technology and Systems in Digital Health.” Systems 13 (6): 439. https://doi.org/10.3390/systems13060439.

- Pham, Tuan. 2025. “Ethical and Legal Considerations in Healthcare AI: Innovation and Policy for Safe and Fair Use.” Royal Society Open Science 12 (5): 241873. https://doi.org/10.1098/rsos.241873.

- Dwivedi, Yogesh K., Laurie Hughes, Elvira Ismagilova, Gert Aarts, Crispin Coombs, Tom Crick, Yanqing Duan, et al. 2019. “Artificial Intelligence (AI): Multidisciplinary Perspectives on Emerging Challenges, Opportunities, and Agenda for Research, Practice and Policy.” International Journal of Information Management 57 (August): 101994. https://doi.org/10.1016/j.ijinfomgt.2019.08.002.

- Schmidt, Julia, and Walter Steingress. 2022. “No Double Standards: Quantifying the Impact of Standard Harmonization on Trade.” Journal of International Economics 137 (April): 103619. https://doi.org/10.1016/j.jinteco.2022.103619.

- Tabassi, Elham. 2023. “Artificial Intelligence Risk Management Framework (AI RMF 1.0).” https://doi.org/10.6028/nist.ai.100-